Garbage Data In, Garbage Data Analysis Out

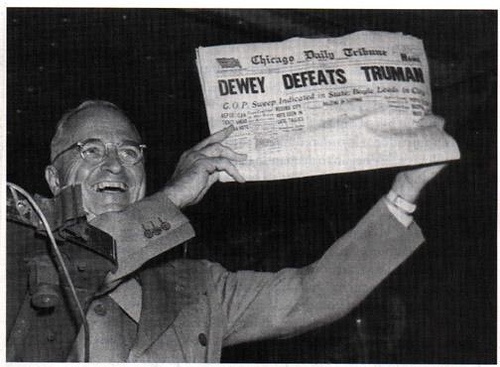

Enough time has passed that we can talk about the election now, right? Good. So what the heck happened? A lot of pollsters and data scientists made a lot of predictions that, when all the votes were counted, proved wildly inaccurate. As Nate Silver found out, shifting demographics, non-representative sampling patterns, and the inherent flaws of phone polling all conspired to put a little egg on the face of nearly every political pollster in the game.

And hey, it happens. Perhaps not always on such a grand, public, and consequential scale, but flawed analyses emerging from suspect data are an unfortunate fact of research. That’s because analytical tools like algorithms and regression models cannot overcome flawed methodologies. It’s like what grade school teachers tell their students every day: “garbage in, garbage out.”

Data Collection Companies Can Make These Same Mistakes

Such mistakes are not just found in political modeling, of course. This chain reaction—a poor methodological model produces inaccurate data leading to erroneous analysis—is endemic to market research as well. The problem is, oftentimes, people don’t even notice.

Market research clients only see the final product, often an executive summary, key findings, and recommendations, and rarely go any deeper. But this summary did not just magically appear as one of three wishes granted by a business genie. Market research products are the result of carefully designed methodologies, precise field execution and data collection, and rigorous and disinterested analysis. Without accuracy and accountability at every stage of the process, inaccuracies are not just possible, they are inevitable.

How to Ensure Accuracy in Data Collection

So how do you ensure you aren’t putting garbage data into your models and getting garbage out? There are several steps all credible market research companies must take in their data collection methodologies, including:

- Vet a dynamic field agent database. A robust database that rewards accuracy and timeliness while eliminating agents unwilling or unable to provide quality data assures you that the rawest numbers put into your models are as close to perfect as possible.

- Validate research whenever possible against existing client data. There should always be checks and balances within market research programs, and validating independent research against existing client data provides this opportunity.

- Maintain a dedicated and independent Quality Assurance team. This is paramount. Different silos within market research firms have differing and sometimes conflicting priorities related to field completion, client deadlines, and cost. Giving a QA team the power and independence necessary to push back against bad data is thus mandatory. Otherwise, quality takes a back seat to other concerns.

- Reject cold-call phone polling as unreliable. Cellphones killed the phone survey. Plain, simple, true.
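To make the first two steps a little more concrete, here is a minimal sketch of what validating field data against client data might look like in Python. Everything in it is a hypothetical illustration rather than a description of any real system: the field names (`agent_id`, `store_id`, `value`), the function name `validate_against_client`, and the 5% tolerance are all assumptions chosen for the example.

```python
from collections import defaultdict


def validate_against_client(field_rows, client_values, tolerance=0.05):
    """Compare field-collected values with the client's own figures.

    field_rows: list of dicts with hypothetical keys
        "agent_id", "store_id", and "value".
    client_values: dict mapping store_id -> the client's known value.
    tolerance: allowed relative difference (0.05 means 5%), an assumed default.

    Returns (flagged_rows, error_rate_by_agent). Rows with no matching
    client figure are skipped, since there is nothing to check them against.
    """
    flagged = []
    checked = defaultdict(int)  # rows per agent that could be validated
    failed = defaultdict(int)   # rows per agent outside the tolerance

    for row in field_rows:
        expected = client_values.get(row["store_id"])
        if expected is None:
            continue
        checked[row["agent_id"]] += 1
        if abs(row["value"] - expected) > tolerance * abs(expected):
            failed[row["agent_id"]] += 1
            flagged.append(row)

    error_rates = {agent: failed[agent] / n for agent, n in checked.items()}
    return flagged, error_rates


# Made-up sample data, purely for illustration.
field_rows = [
    {"agent_id": "A1", "store_id": "S1", "value": 4.99},
    {"agent_id": "A1", "store_id": "S2", "value": 3.50},
    {"agent_id": "A2", "store_id": "S1", "value": 6.49},
    {"agent_id": "A2", "store_id": "S3", "value": 2.00},  # no client figure
]
client_values = {"S1": 4.99, "S2": 3.49}

flagged, error_rates = validate_against_client(field_rows, client_values)
print(flagged)
print(error_rates)
```

The flagged rows are the sort of thing an independent QA team could review before anything reaches a model, and the per-agent error rates could feed into how a field agent database is vetted over time.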

There are many more steps and checks to implement along the way, but this should get you started.

Of course, this is all just on the front end: data collection. There’s a whole ’nother side to this coin: data analysis and reporting. More to come on that one. Stay tuned for Part 2!